People come to TikTok to be creative, find community, and have fun. Being authentic is valued by our community, and we take the responsibility of helping counter inauthentic, misleading, or false content to heart.

We remove misinformation as we identify it and partner with fact checkers at Agence France-Presse (AFP) and Lead Stories to help us assess the accuracy of content. If fact checks confirm content to be false, we’ll remove the video from our platform.

Sometimes fact checks are inconclusive or content cannot be confirmed, especially during unfolding events. In these cases, a video may become ineligible for recommendation into anyone’s For You feed to limit the spread of potentially misleading information. Today, we’re taking that a step further by informing viewers when we identify a video with unsubstantiated content, in an effort to reduce sharing.

Here’s how it works: First, a viewer will see a banner on a video if the content has been reviewed but cannot be conclusively validated.

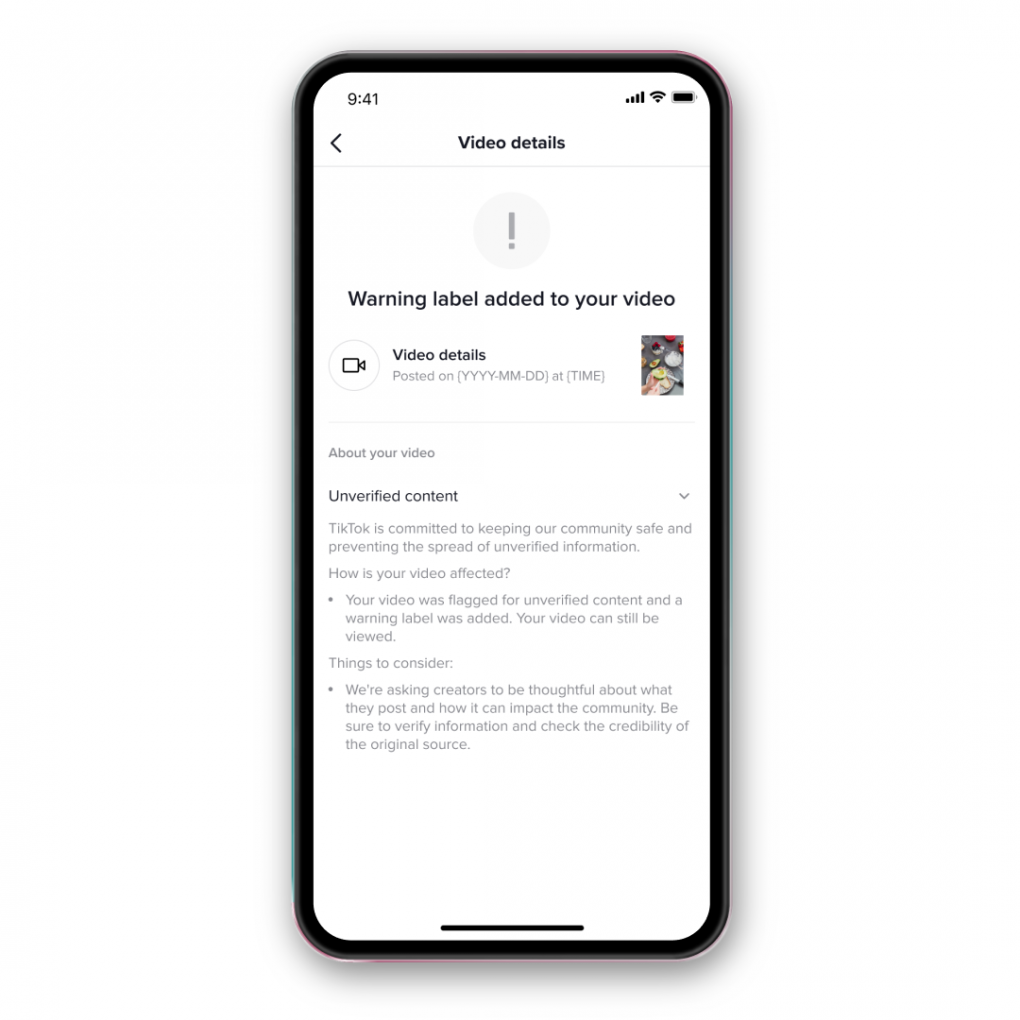

The video’s creator will also be notified that their video was flagged as unsubstantiated content.

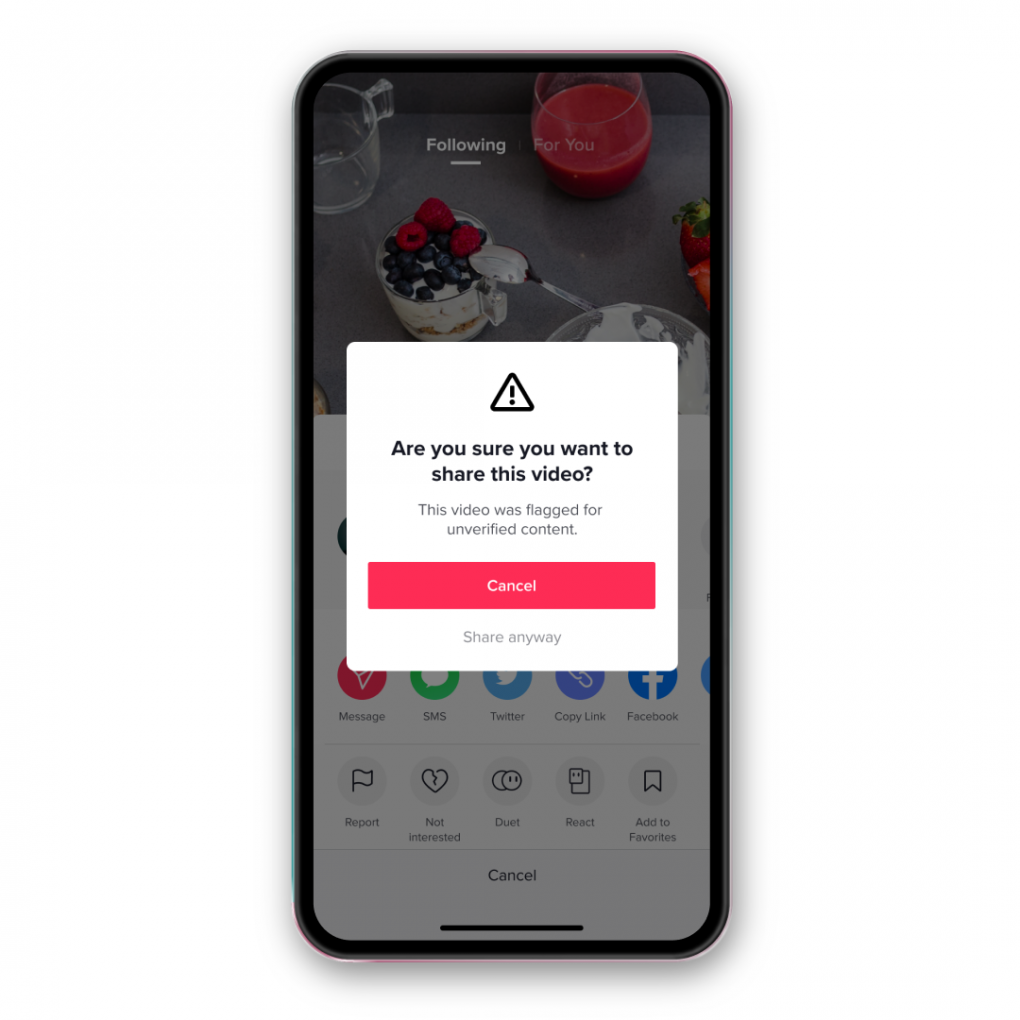

If a viewer attempts to share the flagged video, they’ll see a prompt reminding them that the video has been flagged as unverified content. This additional step requires a pause for people to consider their next move before they choose to “cancel” or “share anyway.”

This feature was designed to help users be mindful about what they share. It was initially rolled out in the US and Canada, then globally over the past few months, and is available to all PH users beginning March 8, 2020.

TikTok is doing great, giving people a warning, especially when what they are doing can't be shared.

Nice and good job, TikTok. This is the right move, especially since TikTok's users are mostly young people. It's for their safety too.